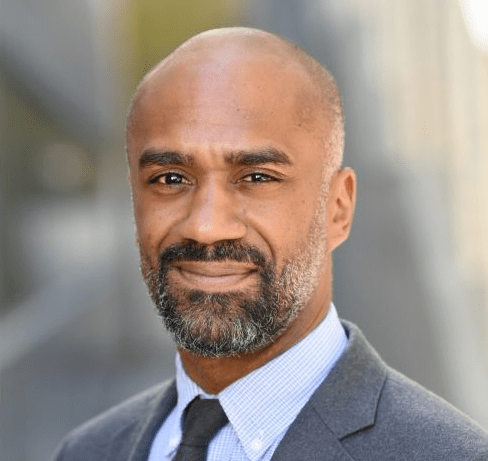

I’ve been studying Big Tech for a long time. What just happened with Anthropic and the Pentagon terrifies me

I spent two years at the Federal Trade Commission, watching regulators doing their best to keep pace with Silicon Valley. I thought I had seen the outer limits of how distorted Big Tech’s command over government policy could be; I did not think it could get worse. I was wrong.

Last month, Anthropic refused to allow the Defense Department to use Claude, the company’s popular flagship family of AI assistants, for domestic mass surveillance and lethal autonomous warfare. In response, the government canceled its $200 million contract with Anthropic. According to the Department, the company’s constraints would undercut its ability to defend the country from real threats.

The Pentagon designated the company a “supply-chain risk” because, according to Secretary of Defense Pete Hegseth, the company’s “woke” approach threatens national security. This move will not significantly impact Anthropic because most of the company’s business comes from nongovernmental partners and clients. But it matters; the company stands to lose hundreds of millions of dollars.

Anthropic has accordingly sued. As it should. It alleges that, among other things, the Department’s designation is based on the company’s protected views about AI safety. This, it argues, violates the First Amendment. A court has so far upheld the designation while briefing continues.

For years, tech companies have invoked the First Amendment and other laws to shield themselves from legal accountability. In this case, Anthropic does the opposite: it argues that the First Amendment allows it to make decisions that safeguard the public, against the government’s wishes.

None of this is normal — and I say that as someone whose job at the FTC and as a law professor is to define what normal government oversight of these companies looks like. In ordinary times, we would expect government procurement officials to insist that private contractors implement technical safeguards in their products, just as policymakers require car manufacturers to implement safety features like seatbelts, airbags, and rearview cameras. For decades, regulators and judges have also successfully forced pharmaceutical manufacturers to attend to the addictiveness of opioids and other pain relief medicines.

Then again, these are not normal times. Tech companies have an outsized impact on public policy. The administration enlists tech firms like Palantir to spy on residents in unprecedented ways. It asked a tech billionaire to take a chainsaw to the administrative state while his young acolytes hoovered up unknown amounts of personal data about U.S. residents with impunity.

Even Anthropic has assumed a lot about its freedom and authority, having just announced a new tool called Mythos, an AI model that finds security vulnerabilities in software and networked resources. At the FTC, we asked that question about every powerful platform that came before us. We rarely got a satisfying answer. I don’t expect one here either.

It is a sign of the times that a military tech contractor’s moral compass is our policy lodestar.

This didn’t happen overnight. And it wasn’t inevitable. But it was foretold. In the 1990s, when the commercial internet was new, an influential cadre of well-meaning Silicon Valley insiders argued that internet entrepreneurs should be free to develop the new “technologies of freedom,” unfettered by law.

John Perry Barlow, the self-styled prophet of the information age, penned perhaps the most recognizable expression of the point in *A Declaration of the Independence of Cyberspace*. In it, he stridently protested a 1996 federal law that would have blocked kids’ access to online porn. The document, however, said so much more. Above all, it argued that the internet would organize public life in ways that were better than anything government could offer.

The ideas that Barlow’s manifesto expressed prevailed in courts and Congress immediately. In 1997, the Supreme Court pronounced broad online free speech rights for users when it struck down the very anti-porn legislation that drew Barlow’s ire. The Court has since expanded this conception of anti-government free speech norms to apply to online companies. This is largely why the most powerful companies today reflexively invoke the First Amendment to shield their products from legal scrutiny.

In 1996, Congress also passed a handful of laws that gifted tech companies exceptional legal protections, the most notable of which blocks legal challenges to companies that host user-generated content. This statute, colloquially called Section 230 for its place in the Communications Act, rests on the conceit that, free from the threat of litigation, platforms would do good and attend to user preferences far better than regulators ever could. At the FTC, we lived with the consequences of that conceit every day.

Judges have since cited the law to dismiss cases against search engines, dating apps, and review sites because those companies presumably promote user-generated content. It has not mattered that the information they knowingly host is unlawful or offensive.

This statutory protection, however, has morphed into far more than a shield for hosting user-generated content. Courts have also relied on it to block cases involving platforms that solicit compromising deepfakes of young women, match violent terrorists, and enable the sale of unregistered weapons to domestic abusers. The courts have reasoned that, in all of these circumstances, the companies are mere platforms for user-generated content.

Today, moreover, the White House is Big Tech’s most powerful friend and lobbyist. Recall how Silicon Valley’s CEO celebrities stood right behind the President on Inauguration Day in January 2025, preening over the crowd before them. Since then, the administration has led the charge to advance their interests. It has, for example, argued for moratoria on state-level AI regulation. (It now seems to be scrambling and waffling given the public outcry.) It also wants to reverse the EU’s regulation of competition and commercial surveillance practices.

Given all of this, it is not surprising that, today, tech companies could presume to preside over public life as they do.

The U.S. has always revered its captains of industry. Bell and Ford earned admiration for their entrepreneurial acumen and foresight. But even Standard Oil, at the height of its power, did not have a direct line to the Oval Office, a seat at the inauguration, and an AI model capable of finding security vulnerabilities in critical infrastructure.

There surely is something to admire in Anthropic’s decision to hold the line against the Defense Department. But in a democracy, where voters are sovereign, this state of affairs is upside down. Why should we trust Anthropic now when, tomorrow, it could change its mind about mass surveillance or automated weaponry? Or Mythos? I have watched enough companies change their minds when the financial incentives shifted. Principles have a way of bending under billion-dollar pressure.

In some regards, the tide may finally be turning against Big Tech. Just a few weeks ago, two juries, one in Los Angeles and the other in Santa Fe, held Meta and YouTube accountable for the ways in which they design social media to hold consumer attention irrespective of the effects on users’ mental health. The companies would do better to implement technical safeguards in their products before releasing them to the public.

Red and blue states and localities are pushing back against the development of data centers in their communities because of concerns over high energy usage and water consumption, among other things.

I have spent my career arguing that government can and must do this job. The Anthropic moment is not a reason for optimism — it is a warning. If Congress won’t act, voters need to put people in office who will. Because the alternative is a future where a private AI company’s terms of service are the closest thing we have to a constitution for the digital age. That should terrify all of us.

The opinions expressed in Fortune.com commentary pieces are solely the views of their authors and do not necessarily reflect the opinions and beliefs of Fortune.

This story was originally featured on Fortune.com